Aivo is a command-line tool that connects your coding agent to almost any model. It also includes built-in models out of the box — no API keys, no signup.

Install aivo with the install script or a package manager.

curl -fsSL https://getaivo.dev/install.sh | bash

irm https://getaivo.dev/install.ps1 | iex

brew install yuanchuan/tap/aivo

npm install -g @yuanchuan/aivo

Start with the built-in provider. Add your own when you're ready.

aivo claude

Add API keys from any provider you trust. Keys are encrypted and stored locally, so you can manage them and switch anytime.

aivo keys add

The picker covers most providers. A few work differently:

If you have a GitHub Copilot subscription, you can add it as a provider in aivo. Note that GitHub Copilot charges by request count, not by token usage.

# choose GitHub Copilot

aivo keys add

You can also sign in with a ChatGPT, Claude, or Gemini subscription. This is only useful when you want to switch between different accounts.

# choose ChatGPT, Claude, or Gemini from the picker

aivo keys add

With aivo, you can connect to Ollama and use models from your local machine or from its cloud service. If a model is not present locally, aivo asks to pull it before use.

# choose Ollama

aivo keys add

# chat with ollama model

aivo chat -m ministral-3:3b

You can use other tools like LM Studio or llama.cpp by adding their local API endpoints as providers.

# list all saved keys

aivo keys

# quickly add a key with options

aivo keys add --base-url https://openrouter.ai/api --key sk-xxx

# activate a key to use

aivo keys use

aivo keys use mykey

# print saved data of a key

aivo keys cat

# edit a saved key

aivo keys edit

# health-check

aivo keys ping

# for more options

aivo keys --help

Launch a coding agent with any provider or model you want. All extra arguments are passed through to the underlying tool.

aivo claude

aivo codex

aivo gemini

aivo opencode

aivo amp

aivo pi

# all extra args pass through

aivo claude --dangerously-skip-permissions

aivo claude --resume 16354407-050e-4447-a068-4db222ff841

aivo claude -k openrouter

aivo claude -k copilot

aivo claude -k

Once a model is applied, aivo claude remembers it and reuses the same model on the next run if no --model option is given.

aivo claude -m moonshotai/kimi-k2.5

# Omit the value to open the model picker.

aivo claude -m

# Select a key and model in one go

aivo claude -k

Without a tool name, aivo run remembers your last key and tool selection, so next time it skips the prompts and goes straight to launching.

aivo run

The --1m and --2m flags are Claude only. They append the canonical [1m]/[2m] suffix to the resolved model so Claude Code uses the long-context window.

aivo claude -m deepseek-v4-pro --1m

aivo claude -m grok-4.20-reasoning --2m

--debug writes every upstream request and response to a JSONL file. Pass a path to override the default location.

aivo claude --debug

aivo claude --debug=/tmp/aivo-http.jsonl
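A JSONL file holds one JSON object per line, so the debug log can be inspected with a few lines of Python. The sketch below is a minimal reader; `read_jsonl` is a hypothetical helper name, and the fields inside each record are not documented here, so the code makes no assumptions about them.

```python
import json
from pathlib import Path
from typing import Iterator


def read_jsonl(path) -> Iterator[dict]:
    """Yield one parsed JSON object per non-empty line of a JSONL file."""
    with Path(path).open(encoding="utf-8") as fh:
        for line in fh:
            line = line.strip()
            if line:  # tolerate blank lines between records
                yield json.loads(line)


# Example (the path matches the --debug example above):
# for record in read_jsonl("/tmp/aivo-http.jsonl"):
#     print(sorted(record.keys()))
```

Printing the keys of the first few records is a quick way to learn the actual record layout before filtering on it.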

Once you add a provider and set up the key, you can use the models command to see which models the provider offers. Most providers expose their model list through an API, so aivo fetches it on demand and caches it for later use.

aivo models

aivo models -k openrouter

# search by name

aivo models -s minimax

# force refresh the cached list

aivo models --refresh
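The fetch-on-demand, cache, and forced-refresh behavior described above can be pictured roughly as follows. This is an illustrative sketch, not aivo's code: `ModelListCache` and the `fetch` callable are hypothetical, and the cache here lives in memory, whereas aivo's storage is not described in this document.

```python
from typing import Callable, Dict, Iterable, List


class ModelListCache:
    """Cache model lists per saved key; fetch only when missing or refreshed."""

    def __init__(self, fetch: Callable[[str], Iterable[str]]):
        self._fetch = fetch  # hypothetical: asks the provider for its model list
        self._cache: Dict[str, List[str]] = {}

    def models(self, key_name: str, refresh: bool = False) -> List[str]:
        # refresh=True plays the role of `aivo models --refresh`
        if refresh or key_name not in self._cache:
            self._cache[key_name] = list(self._fetch(key_name))
        return self._cache[key_name]

    def search(self, key_name: str, term: str) -> List[str]:
        # Case-insensitive substring match, in the spirit of `aivo models -s`
        return [m for m in self.models(key_name) if term.lower() in m.lower()]
```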

If a model name is too long, you can give it a short alias.

Model aliases are accepted anywhere -m/--model works.

aivo alias fast=claude-haiku-4-5

aivo alias mimo xiaomi/mimo-v2-pro

# use it

aivo claude -m fast

aivo chat -k vercel -m mimo

# list and remove

aivo alias

aivo alias rm fast

Bundle a tool and its flags into a named preset. When the first arg after the alias name is a known tool (claude, codex, gemini, opencode, amp, pi), the alias becomes a launch shortcut: invoke it via aivo run <name> or just aivo <name>.

aivo alias quick claude --key work --model fast --1m

# run it

aivo quick

# override individual flags inline — explicit user flags win

aivo quick -k personal

aivo quick -m other

A launch alias's --model can itself reference a model alias: quick --model fast resolves through fast to the underlying model.
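The resolution order can be sketched in a few lines. The tables below mirror the `fast`, `mimo`, and `quick` examples from this section; the function name and the dictionary layout are assumptions made for illustration and say nothing about how aivo stores aliases internally.

```python
from typing import Optional

# Model aliases: short name -> real model name (from the examples above)
MODEL_ALIASES = {"fast": "claude-haiku-4-5", "mimo": "xiaomi/mimo-v2-pro"}

# Launch alias: `aivo alias quick claude --key work --model fast --1m`
LAUNCH_ALIASES = {
    "quick": {"tool": "claude", "key": "work", "model": "fast", "extra": ["--1m"]},
}


def resolve_launch(name: str, key: Optional[str] = None,
                   model: Optional[str] = None) -> dict:
    """Expand a launch alias; explicit key/model overrides win over the preset."""
    preset = LAUNCH_ALIASES[name]
    chosen_model = model or preset["model"]
    return {
        "tool": preset["tool"],
        "key": key or preset["key"],
        # A model alias resolves to its target; other names pass through as-is.
        "model": MODEL_ALIASES.get(chosen_model, chosen_model),
        "extra": list(preset["extra"]),
    }
```

With these tables, `resolve_launch("quick")` yields the `claude` tool with key `work` and model `claude-haiku-4-5`, while `resolve_launch("quick", key="personal")` changes only the key.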

Talk to any model directly from your terminal. Full-screen TUI for conversations, or one-shot mode for quick answers and shell pipelines.

Interactive chat in your terminal with streaming and markdown rendering. The selected model is remembered per saved key.

aivo chat

aivo chat -m gpt-5.4

# open model picker

aivo chat -m

# open key and model pickers if you forget the names

aivo chat -km

Send a single prompt and exit. When -x has a message, piped stdin is appended as context.

When -x has no message, the entire stdin becomes the prompt.

aivo -x "pro tips for git"

# type interactively, Ctrl-D to send

aivo -x

Pipe anything into aivo. It reads stdin, adds your prompt, and sends it to the model.

git diff | aivo -x "Write a commit message"

cat error.log | aivo -x "Find the root cause"

cat error.log | aivo -x
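The two stdin rules can be summarized as a small function. This is a sketch of the documented behavior only: `compose_prompt` is a hypothetical name, and the blank-line separator between the message and the piped text is an assumption, since the exact joining format is not specified here.

```python
from typing import Optional


def compose_prompt(message: Optional[str], stdin_text: Optional[str]) -> str:
    """Combine the -x message and piped stdin into one prompt."""
    message = (message or "").strip()
    stdin_text = (stdin_text or "").strip()
    if message and stdin_text:
        # Message first, piped input appended as context (separator assumed).
        return f"{message}\n\n{stdin_text}"
    # Only one of them is present: it becomes the whole prompt.
    return message or stdin_text
```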

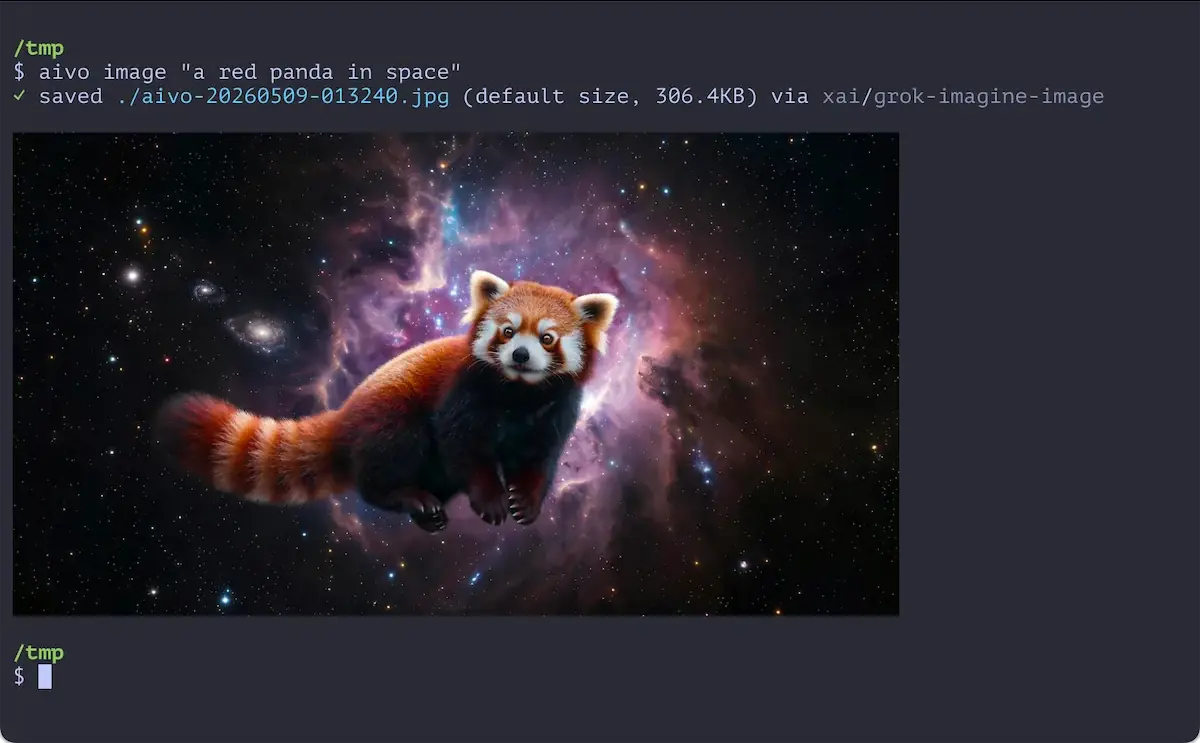

Generate images, video, and speech against your active provider's media APIs. These commands are experimental — flags and behavior may change.

Generate images from a text prompt (gpt-image-1, dall-e-3, Gemini image models, etc.). Inline preview shows up in Kitty, Ghostty, WezTerm, Warp, and iTerm2.

aivo image "a red panda in space"

Sizes: 1024x1024, 1792x1024, 1024x1792.

Quality: standard, hd, high, low.

aivo image "..." -o ./out/{ts}-{model}.png

aivo image "..." --size 1792x1024 --quality hd

aivo image "..." --no-preview

aivo image "..." --json

Generate videos from a text prompt (sora-2, veo-3.0-generate-preview, etc.). The job runs async; aivo polls until it finishes.

aivo video "a corgi running on a beach at sunset"

aivo video "city timelapse" -m sora-2 --seconds 8 -s 1920x1080

If the polling times out (or you hit Ctrl+C), reattach to the same job with --job-id.

aivo video "..." --seed 42

aivo video "..." --timeout 1200

aivo video --job-id video_abc123

aivo video "..." --json
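The poll-until-done pattern with a timeout looks roughly like the sketch below. It is a generic illustration, not aivo's implementation: `poll_job`, the status strings, and the injectable `sleep` and `clock` parameters are all hypothetical and exist mainly to keep the loop easy to test.

```python
import time
from typing import Callable


def poll_job(check_status: Callable[[], str], timeout: float, interval: float,
             sleep: Callable[[float], None] = time.sleep,
             clock: Callable[[], float] = time.monotonic) -> str:
    """Poll until the job reaches a terminal status or the timeout elapses."""
    deadline = clock() + timeout
    while True:
        status = check_status()
        if status in ("completed", "failed"):  # assumed terminal states
            return status
        if clock() >= deadline:
            # The remote job keeps running; only the local wait gives up,
            # which is why reattaching by job id later is possible.
            raise TimeoutError("job still running; reattach with its job id")
        sleep(interval)
```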

Turn text into speech and play it (tts-1, tts-1-hd, etc.). Reads from a positional prompt, -f <path>, or piped stdin. Output is cached, so rerunning the same prompt replays from cache.

aivo speak "hello world"

aivo speak "narration line" -m tts-1-hd --voice nova

echo "hi from pipe" | aivo speak

aivo speak -f script.txt

Replay or delete previously generated audio.

aivo speak --list

Expose your active provider as a local OpenAI-compatible endpoint. Any tool that speaks the OpenAI API can use it: VS Code extensions, Cursor, Python scripts, anything.

aivo serve

aivo serve --port 8080

aivo serve --host 0.0.0.0

If a request hits a rate limit (429) or server error (5xx), aivo retries with the next saved key automatically.

aivo serve --failover
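The failover rule (retry on 429 or 5xx with the next saved key) can be sketched as below. The function names and the `send` callable are hypothetical, and trying keys once each in list order is an assumption; the order aivo actually uses is not stated in this document.

```python
from typing import Callable, Sequence, Tuple


def should_fail_over(status: int) -> bool:
    """Rate limits (429) and server errors (5xx) trigger a retry."""
    return status == 429 or 500 <= status <= 599


def send_with_failover(keys: Sequence[str],
                       send: Callable[[str], Tuple[int, str]]) -> Tuple[str, int, str]:
    """Try each key in order; return (key, status, body) of the final attempt."""
    if not keys:
        raise ValueError("at least one key is required")
    result = None
    for key in keys:
        status, body = send(key)
        result = (key, status, body)
        if not should_fail_over(status):
            return result  # success, or a client error that another key would not fix
    return result  # every key hit a retryable error; report the last one
```

A 400-class error other than 429 is returned immediately in this sketch, since switching keys would normally not change the outcome of a malformed request.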

Log every request and response. Pipe to jq for readable output, or write to a file.

aivo serve --log | jq .

aivo serve --log /tmp/requests.jsonl

aivo serve --auth-token

aivo serve --auth-token my-secret

aivo serve --cors

aivo serve --timeout 60
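Because the endpoint is OpenAI-compatible, a script can call it with nothing but the Python standard library. The sketch below assumes the server listens on port 24860, as in the curl example in this section, and that an auth token, if one is set, is sent as a Bearer header; that header format is an assumption, not something this document confirms. The helper names are hypothetical.

```python
import json
import urllib.request
from typing import Optional

# Port taken from the curl example; change it if you start the server with --port.
BASE_URL = "http://localhost:24860/v1"


def build_chat_request(prompt: str, model: str = "gpt-4o",
                       token: Optional[str] = None) -> urllib.request.Request:
    """Build a chat-completions request without sending it."""
    body = json.dumps({
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    headers = {"Content-Type": "application/json"}
    if token:
        headers["Authorization"] = f"Bearer {token}"  # assumed header format
    return urllib.request.Request(f"{BASE_URL}/chat/completions",
                                  data=body, headers=headers, method="POST")


def ask(prompt: str, **kwargs) -> str:
    """Send the prompt and return the first choice's text (needs a running server)."""
    with urllib.request.urlopen(build_chat_request(prompt, **kwargs), timeout=60) as resp:
        reply = json.load(resp)
    return reply["choices"][0]["message"]["content"]


# Usage, with `aivo serve` running in another terminal:
# print(ask("hello"))
```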

curl http://localhost:24860/v1/chat/completions \
  -H "Content-Type: application/json" \
  -d '{"model": "gpt-4o", "messages": [{"role": "user", "content": "hello"}]}'

Every request is logged to a local SQLite database: chat, agent launches, serve. Token counts, models, response times, errors. All queryable from the command line.

aivo logs

Narrow by source, tool, or text. --errors shows only failures.

aivo logs --by chat -n 5

aivo logs --by claude --errors

aivo logs -s "rate limit"

aivo logs --json

Tail logs in real time. New entries appear as they happen.

aivo logs --watch

aivo logs --watch --jsonl

aivo logs --by run --watch

aivo logs show 7m2q8k4v9cpr

aivo logs status

Aggregates token counts from aivo chat, Claude Code, Codex, Gemini, OpenCode, Amp, and Pi by reading each tool's native data files.

aivo stats

aivo stats claude

aivo stats chat

# raw numbers for scripts

aivo stats -n

# filter by provider

aivo stats -s openrouter

# last N units (m, h, d, w)

aivo stats --since 7d

aivo stats claude --since 24h

# show all models

aivo stats -a

# bypass cache

aivo stats -r
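A value such as `7d` or `24h` maps naturally onto a time window. The sketch below shows one way to parse it; `parse_since` is a hypothetical helper, and reading `m` as minutes (rather than months) is an assumption, since the document lists only the unit letters.

```python
import re
from datetime import timedelta

# Unit letters from the --since comment; "m" is assumed to mean minutes.
_UNITS = {"m": "minutes", "h": "hours", "d": "days", "w": "weeks"}


def parse_since(value: str) -> timedelta:
    """Turn '<N><unit>' (for example '7d') into a timedelta."""
    match = re.fullmatch(r"(\d+)([mhdw])", value.strip())
    if not match:
        raise ValueError(f"expected <number><m|h|d|w>, got {value!r}")
    amount, unit = match.groups()
    return timedelta(**{_UNITS[unit]: int(amount)})
```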

$ aivo stats

────────────────────────────────────────────────────

408M tokens · 14B cached · 5.0K sessions · 77 models

By tool sessions tokens

claude 4.2K 295M ████████████████████

codex 256 87M █████▉

opencode 166 10M ▊

chat 91 8.0M ▌

gemini 204 4.2M ▎

pi 85 3.8M ▎

By model tokens

gpt-5.4 75M ████████████████████

minimax-m2.5 63M ████████████████▊

claude-sonnet-4.6 53M ██████████████▏

claude-opus-4.6 40M ██████████▋

minimax-m2.7 38M ██████████▏

claude-opus-4.7 31M ████████▏

laguna-m.1 22M █████▉

claude-haiku-4-5-20251001 14M ███▉

kimi-k2.5:cloud 11M ██▉

claude-sonnet-4.5 6.6M █▊

gpt-5.3-codex 6.1M █▋

glm-4.7-free 6.0M █▋

glm-4.7 5.0M █▍

mercury-2 4.3M █▏

deepseek-v4-flash 3.7M █

gpt-5.5 3.6M █

kimi-k2.5 2.0M ▌

starter 1.8M ▌

gpt-5.1-codex 1.5M ▍

kat-coder-pro-v1 1.4M ▍

others (57 models) 9.8M ██▋

aivo update detects whether you installed via Homebrew, npm, or the install script and updates accordingly.

Failed updates are rolled back automatically; a manual rollback is available if a new version misbehaves.

aivo update

# force update even if installed via a package manager

aivo update --force

# restore the previous version from the last backup

aivo update --rollback

Keys are AES-256-GCM encrypted at rest in ~/.config/aivo/, with the encryption key derived from your machine. Requests go straight from your machine to the provider.

Prompts, responses, and usage stay between your machine and the provider; aivo never sees them. Aivo runs no servers and collects no telemetry.

Coding agents speak Anthropic, OpenAI, or Gemini protocols. Providers also speak one of the three. Aivo detects both ends and converts request and response shape on the fly — tools, streaming, and reasoning blocks included. There is no per-pair shim, so any new agent that speaks one of the three protocols works with every provider that speaks any of them.
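To make the idea of shape conversion concrete, here is a deliberately small sketch that maps an Anthropic-style Messages request onto an OpenAI-style chat-completions request. It handles plain text only and is not aivo's converter: tools, streaming, and reasoning blocks are left out, and the function names are hypothetical.

```python
from typing import Union


def _text(content: Union[str, list]) -> str:
    """Flatten Anthropic content (a string or a list of blocks) to plain text."""
    if isinstance(content, str):
        return content
    return "".join(block["text"] for block in content if block.get("type") == "text")


def anthropic_to_openai(request: dict) -> dict:
    """Convert a text-only Anthropic Messages request to OpenAI chat format."""
    messages = []
    # Anthropic keeps the system prompt at the top level;
    # OpenAI expects it as the first message.
    if request.get("system"):
        messages.append({"role": "system", "content": _text(request["system"])})
    for message in request["messages"]:
        messages.append({"role": message["role"], "content": _text(message["content"])})
    converted = {"model": request["model"], "messages": messages}
    if "max_tokens" in request:
        converted["max_tokens"] = request["max_tokens"]
    return converted
```

The same function pair would be needed in the opposite direction for responses, which is where most of the real complexity (tool calls and streamed deltas) lives.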

You do not need an account. The built-in provider works without signup. Bring your own keys (or sign in with a ChatGPT, Claude, or Gemini subscription) when you want a specific provider; that login is between you and them, not aivo.

A subscription login cannot be used with other agents. Each subscription's terms restrict use to its own agent, and aivo respects that.